Servana

AI-Powered Industrial Support Platform for Reducing Equipment Downtime

What is Servana?

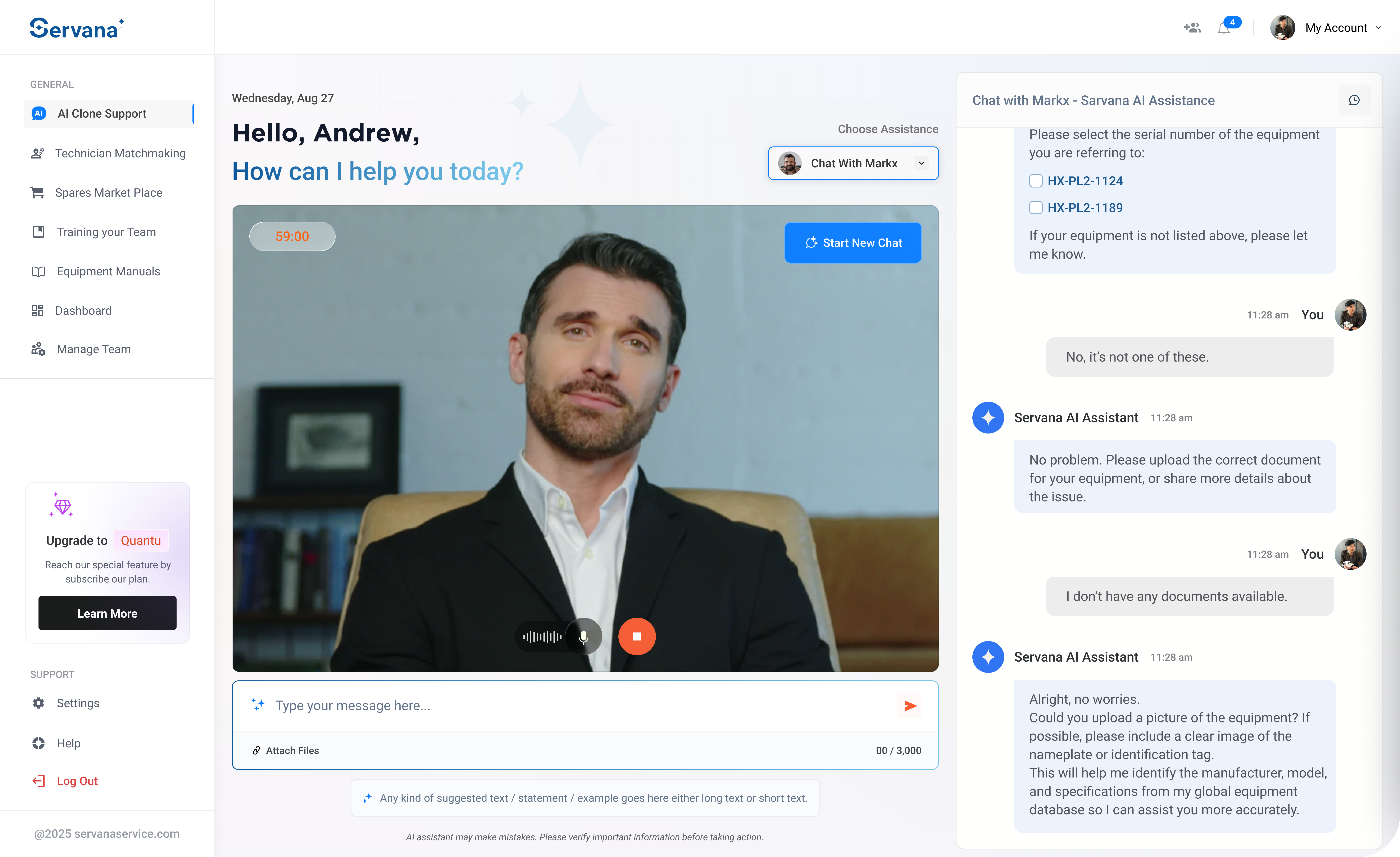

Servana is an AI-powered industrial support platform designed to reduce equipment downtime and improve operational efficiency. It operates in asset-heavy industrial environments where equipment failures directly impact productivity and cost. The platform serves operators, technicians, spare-part sellers, and administrators who currently rely on fragmented, manual support processes. Operators raise incidents using text, voice, or images. The AI analyzes and classifies each issue, retrieves relevant knowledge from manuals and historical data, and resolves the issue through AI guidance or escalation to a technician. Feedback from these interactions is used to improve the system's responses over time.

Fragmented industrial support costs time and money

Industrial operators face repeated challenges that lead to increased operational costs and inconsistent service quality. There is no centralized equipment knowledge, and coordination between operators, technicians, and sellers is manual and inefficient.

High Equipment Downtime

Equipment failures directly impact productivity and cost, with delayed access to qualified technicians extending downtime further.

Manual Troubleshooting

Operators rely on manual communication and troubleshooting with no AI-assisted diagnostics or centralized knowledge base.

Fragmented Coordination

No unified platform connects operators, technicians, spare-part sellers, and administrators, which leads to inefficient workflows and repeated issues.

Servana follows a modular architecture with a web-based frontend for all roles, secure backend services, an AI orchestration layer, and a centralized vector-based knowledge store.

AI Intelligence Layer

The layer handles query classification, context enrichment, retrieval from vectorized manuals and documents, response generation using LLMs, and continuous learning from feedback loops, with the aim of faster and more accurate resolutions over time.
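The stages named above can be sketched as a small pipeline. This is a minimal illustration, not Servana's implementation: the keyword rules stand in for a real classifier, the category filter stands in for vector retrieval, and `generate` only builds the prompt an LLM would receive. All names (`Incident`, `CATEGORY_KEYWORDS`, `record_feedback`, the `HX-200` model in the usage note) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical keyword rules standing in for a trained classifier.
CATEGORY_KEYWORDS = {
    "hydraulic": ("leak", "pressure", "pump"),
    "electrical": ("fuse", "voltage", "motor"),
}


@dataclass
class Incident:
    text: str
    equipment_model: str
    category: str = "general"
    context: List[str] = field(default_factory=list)


def classify(incident: Incident) -> Incident:
    """Assign the first category whose keywords appear in the incident text."""
    lowered = incident.text.lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            incident.category = category
            break
    return incident


def enrich(incident: Incident, history: Dict[str, List[str]]) -> Incident:
    """Attach a copy of past resolutions recorded for the same equipment model."""
    incident.context = list(history.get(incident.equipment_model, []))
    return incident


def retrieve(incident: Incident, manual_chunks: List[dict]) -> List[str]:
    """Keep manual chunks tagged with the incident's category.

    A real system would rank chunks by vector similarity instead.
    """
    return [c["text"] for c in manual_chunks if c["category"] == incident.category]


def generate(incident: Incident, passages: List[str]) -> str:
    """Build the prompt an LLM would receive; the model call itself is omitted."""
    lines = [f"Category: {incident.category}", f"Issue: {incident.text}"]
    lines += [f"Manual: {p}" for p in passages]
    lines += [f"History: {h}" for h in incident.context]
    return "\n".join(lines)


def record_feedback(incident: Incident, resolution: str,
                    history: Dict[str, List[str]]) -> None:
    """Feedback loop: store the confirmed fix so later incidents can reuse it."""
    history.setdefault(incident.equipment_model, []).append(resolution)
```

In use, an incident such as `Incident("Oil leak near the pump", "HX-200")` would pass through `classify`, `enrich`, `retrieve`, and `generate` in that order, and `record_feedback` would be called once the operator or technician confirms what fixed the problem.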

Platform Backend

Secure backend services with JWT authentication, role-based access control, secure API routing, HTTPS/TLS encryption, and metadata-based isolation. Global and private knowledge are strictly separated to prevent data leakage.
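To make the JWT step concrete, the sketch below signs and verifies a token using only the Python standard library. It assumes HS256 signing and hypothetical `role` and `org_id` claims; the source does not say which algorithm or claim names Servana uses, and a production service would more likely rely on a maintained JWT library than on hand-written verification.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(raw: bytes) -> str:
    """Base64url without padding, as used by JWT."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    """Restore the stripped padding before decoding."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_token(claims: dict, secret: bytes) -> str:
    """Create a compact HS256 token from a claims dictionary."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + _b64url_encode(signature)


def verify_token(token: str, secret: bytes, now=None) -> dict:
    """Return the claims if the signature and expiry are valid, else raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts

    header = json.loads(_b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        raise ValueError("unsupported algorithm")

    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256
    ).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")

    claims = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    # A token without an "exp" claim is treated as expired in this sketch.
    if claims.get("exp", 0) <= current:
        raise ValueError("token expired")
    return claims
```

The verified claims, for example `claims["role"]` and `claims["org_id"]`, would then feed the role-based access checks and the metadata filter applied to knowledge queries.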

Role-Based Modules

Four distinct modules, each with tailored workflows and permissions: Operator (AI support, manuals, incidents), Technician (availability, job lifecycle), Seller/OEM (catalog, orders), and Admin (user management, dashboards).
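One way to picture the module split is a role-to-feature table with a single permission check in front of each handler. The feature identifiers below are hypothetical labels derived from the module descriptions, not Servana's actual permission names.

```python
from enum import Enum


class Role(Enum):
    OPERATOR = "operator"
    TECHNICIAN = "technician"
    SELLER = "seller"
    ADMIN = "admin"


# Hypothetical feature identifiers, one set per module.
MODULE_FEATURES = {
    Role.OPERATOR: {"ai_support", "manuals", "incidents"},
    Role.TECHNICIAN: {"availability", "job_lifecycle"},
    Role.SELLER: {"catalog", "orders"},
    Role.ADMIN: {"user_management", "dashboards"},
}


def can_access(role: Role, feature: str) -> bool:
    """Return True if the role's module includes the requested feature."""
    return feature in MODULE_FEATURES.get(role, set())


def require_feature(role: Role, feature: str) -> None:
    """Raise PermissionError when the role may not use the feature."""
    if not can_access(role, feature):
        raise PermissionError(f"{role.value} may not access {feature}")
```

Keeping the table in one place means a new feature is granted by editing data rather than scattering role checks through request handlers.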

Figure: End-to-End Flow

Figure: Layered Architecture

Where the hard problems lived

AI Accuracy in Early Iterations

Tuning AI responses for industrial-specific troubleshooting required iterative quality improvements across sprint cycles to achieve reliable diagnostics.

Multi-Role Workflow Coordination

Workflows had to be coordinated across four distinct roles (operator, technician, seller, admin), each with different permissions and data access requirements.

Data Isolation in Shared Knowledge Base

Strict metadata-based isolation had to be enforced within a shared vector knowledge base to prevent data leakage between organizations while maintaining search performance.
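A minimal sketch of metadata-based isolation: every stored chunk carries an owner tag, and the search filters on that tag before any similarity ranking, so another organization's documents can never appear in the results. The `org_id` field, the `"global"` scope value, and the in-memory list are assumptions for illustration; a real vector store would apply the same filter inside its index query.

```python
import math

GLOBAL_SCOPE = "global"  # hypothetical tag for knowledge shared with every tenant


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(store, query_vector, org_id, top_k=3):
    """Rank only the documents the caller's organization is allowed to see."""
    # Filter first: private documents of other organizations are never scored.
    visible = [doc for doc in store if doc["org_id"] in (GLOBAL_SCOPE, org_id)]
    ranked = sorted(
        visible,
        key=lambda doc: cosine(query_vector, doc["vector"]),
        reverse=True,
    )
    return ranked[:top_k]
```

Filtering before ranking also shrinks the candidate set that has to be scored, which is one way the isolation requirement and the search-performance requirement can work together rather than against each other.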